- Over half (56%) of IT decision makers surveyed feel that their personal data is less secure now than 5 years ago

- Almost 9 in 10 (87%) feel forced to share an increasing amount of personal data

- 94% feel that increased regulation is needed to control what voice assistants, such as Google, Siri, and Alexa, are allowed to listen to and collect

Back in 2018, a Forbes article estimated that 90% of the world’s data had been generated in the two years prior – an extraordinary claim at the time, and a figure that is likely even greater today with the continued growth of the Internet of Things (IoT). Fast forward five years, and while the IoT is the fastest-growing data segment, social networks are close behind. But where is all this data stored? Who has access to it? How is it protected? With so many questions, it’s easy to feel overwhelmed and paranoid about how organizations use our data.

We are all responsible for adding to this large collective of data, whether knowingly or not. Each time we browse the internet, we leave behind a digital trail that organizations might store and use for decision-making. We also expand our digital footprint by consciously creating and sharing digital identities through social media, online discussions, electronic applications, and browsing gadgets.

Data collection is not limited to consumers. Businesses are increasingly relying on the data of their competitors, employees, and customers to feed the Big Data sets that are now becoming too complex for traditional data processing applications to handle. Increasingly, large language models are taking our data and using it to recognise, predict, or even generate content.

We thought we’d take the opportunity to reach out to our Vanson Bourne Community of IT professionals to get their thoughts on data, from both a consumer and ‘insider’ point of view, and whether the aforementioned concerns might be justified.

Do IT decision makers feel their data is safe, and do they care?

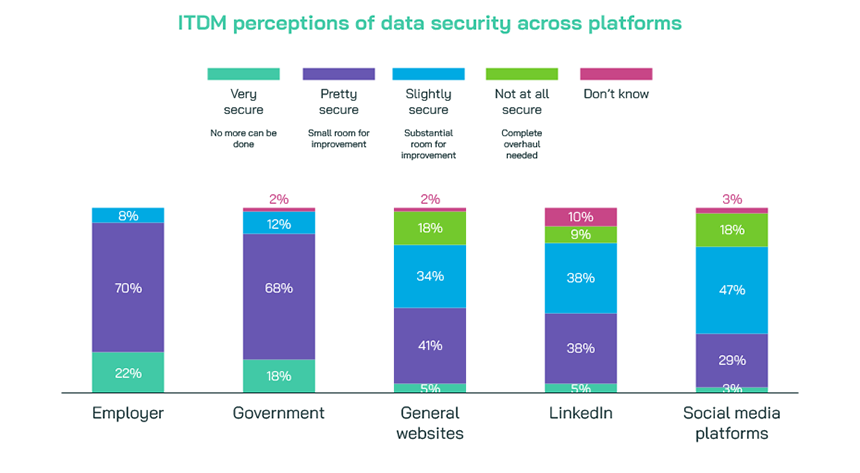

IT decision makers feel their data is most secure with their employer and least secure with social media platforms and websites. The lengthy regulations and protocols protecting our personal and professional data held by employers are reassuring. This validation makes reading all those policies and documents worthwhile.

Professional networking sites, however, didn’t go unscathed. Almost 1 in 10 (9%) of those surveyed feel that LinkedIn as a platform needs a complete overhaul of its security process. As LinkedIn is one of the top recruitment resources in the UK today, it’s surprising that so many feel their data is at risk with the platform but not with their employer, who can access their details from it.

UK ITDMs recognize their responsibilities in securing data despite needing improvements in security processes across platforms. Their insider perspective provides valuable lessons for others.

Recent events have driven an acceleration in digital transformation. The latest AI developments promise a platform shift like the cloud or the internet, leading to extensive digital data collection. Most entities have data security procedures, but the sophistication and protection levels vary greatly across organizations and borders. The European Union’s General Data Protection Regulation (GDPR) is considered one of the toughest privacy and security laws globally. It protects data belonging to EU citizens and residents, and applies to organizations that process such data regardless of their location.

These measures are, in part, aimed at improving our confidence in the privacy and security of our data, yet their impact appears somewhat muted: just over half (56%) of the ITDMs we interviewed feel that their personal data is less secure now than 5 years ago, while 39% felt this was not the case and 5% didn’t really know. Although only a few breaches make headlines, like those at Yahoo, LinkedIn, and Marriott that impacted billions, data breaches are more common than we think.

So, what if the worst were to happen?

All the ITDMs interviewed expressed concern about their data being leaked. This makes sense from a consumer point of view – after all, these same ITDMs are consumers themselves. But aren’t they also those responsible for securing our data in the first place? Who should we hold accountable when data leaks?

Evidently, we shouldn’t solely blame the ITDMs, who are responsible for securing our data. At least not in their eyes. While ITDMs accept responsibility for data leaks, short of blaming the attackers themselves, 86% of respondents feel the company (e.g., Instagram or Facebook) is at least partially to blame for social media data attacks. Similarly, over 8 in 10 ITDMs felt that online websites (including news and shopping sites), LinkedIn, or even government sites (storing data relating to pensions or tax information) were themselves to blame. Only employers fared slightly more positively, with 79% blaming them for a related data leak. This may be due to insufficient tools, security protocols, or investment in cybersecurity available to ITDMs.

We might need more research to delve deeper into blame, but is that really the point here? If data breaches are out of our control but we still need to share data, do we retain any autonomy? With 87% of those interviewed feeling forced to share an increasing amount of personal data, we may not like the answer to that question!

The increasing use of AI and machine learning looks set to continue that data dilemma. The vast majority (93%) of ITDMs interviewed have had exposure to or experience with these technologies in some capacity. However, 92% feel that these tools and programmes are not keeping their data fully secured. Clearly, cybersecurity needs to remain at the forefront for organisations with access to our data.

Are humanoid or autonomous robots any safer?

Humanoid robots are designed to mirror human behaviour, like Frankenstein’s creation. They often feature human-like facial expressions and features. Are they the modern-era Frankenstein? Typically, these robots can perform human-like activities such as running, jumping, and carrying objects.

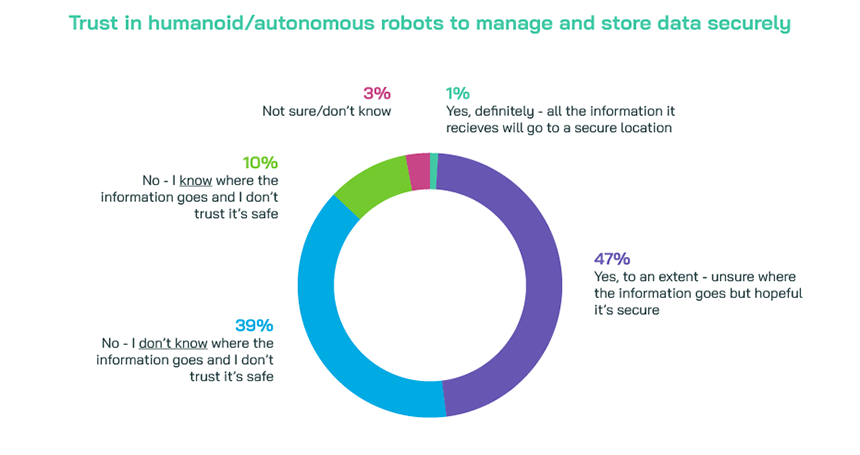

An extension of this is autonomous robots, such as cleaning or hospitality bots, which operate independently of human operators and use sensors to perceive their environment. Both have seen growing interest in the last few years, with 89% of our ITDMs surveyed reporting an experience with one, 19% of whom have interacted with or used one. However, only 1% of decision makers feel the information these robots use is stored in a secure location. Additionally, just under half (47%) are hopeful the data is securely protected, but 49% don’t trust that it is protected safely.

This raises the question – why are ITDMs, a tech-savvy group, interacting with or exposing themselves to potentially unsafe technology? Perhaps it’s due to the many (62%) who feel these robots are the future. Technological advancements in AI, ML, and robotics are increasing our world’s independence and complexity, impacting many roles as they shift from humans to machines. Three-quarters of ITDMs surveyed feel AI technology, such as Microsoft’s Copilot or ChatGPT, will disrupt the administrative job market. They believe this technology will replace human employees. Is the future of jobs no longer human?

Hopefully the fate of AI, and robots, won’t end as disastrously as Frankenstein’s story of raising the dead. We can only hope tech engineers and designers create secure data protection processes to eliminate data breach opportunities. After all, a data breach is a fate just as scary as Frankenstein’s creation coming to life!

Organizations should compensate data subjects directly for breaches by paying the financial penalties. Of the ITDMs surveyed, 91% agree that organisations should be legally forced to financially compensate individuals for breaches involving their personal data. Almost all (94%) feel that increased regulation is needed to control what voice assistants, such as Google, Siri, and Alexa, are allowed to listen to and collect.

So why do we need so much data?

Data is crucial to an organization’s success, and we firmly believe in its paramount importance. Research conducted by McKinsey suggests that companies that strategically use data, such as consumer behavioural insights, to inform their business decisions outperform their peers in sales growth by 85%.

It’s clear that concern around the data organisations hold is at the forefront for professionals and consumers alike. Most ITDMs we’ve interviewed, who are consumers themselves, feel increasing pressure to share more personal data with organizations. Organizations should regularly review and update security policies to protect collected data, considering what is collected, why it is collected, how it is stored, how long it is kept, and how it is protected. These measures help them avoid data leaks and the resulting financial or legal implications. A company won’t want to make the headlines for the wrong reasons!

More importantly, by safeguarding data effectively, organizations can earn the trust of data owners, encouraging them to share more information. With data an increasingly valuable commodity, trust in a brand can have a significant impact on its success. And to build trust? Organisations need to be accountable and commit to understanding their audience.

Authentic messaging that reflects audience values significantly builds brand trust. Vanson Bourne has a wealth of experience helping brands refine their messaging through understanding the impact that key individual elements of a message have on audience appeal. In a separate survey of 300 B2B marketers, strategists, and insight professionals, from the US and UK, 95% told us their organisation is conducting (or has plans to conduct) message testing.

Get in touch to find out more about our message testing capabilities, and how we can support your other research requirements.

Methodology

100 UK IT decision makers from the Vanson Bourne Community were interviewed in October 2023.